4 Bits Are Enough

Peter AJ van der Made

Traditional convolutional neural networks (CNNs) use 32-bit floating point parameters and activations. They require extensive computing and memory resources. Early convolutional neural networks such as AlexNet had 62 million parameters.

Over time, neural networks have increased in size and capability. GPT-3, a transformer network, has 175 billion parameters. The compute used in the largest AI training runs has grown exponentially, doubling every 3.4 months on average. Millions or billions of multiply-and-accumulate (MAC) operations must be executed for each inference. These operations are performed on batches of data on large servers with stacks of graphics processing units (GPUs) or on costly cloud services, and these requirements keep accelerating.

At the other end of the spectrum, the growing popularity of deep learning networks in small electronic devices demands energy-efficient, low-latency implementations of what are sometimes similar models. Deep learning networks are found in smartphones, industrial IoT, home appliances, and security devices. Many of these devices are subject to stringent power and security requirements. Security issues can be mitigated by not uploading raw data to the internet and by performing all or most of the processing on the device itself. However, given the constraints at the edge, models running on these devices must be much more compact in every dimension, without compromising accuracy.

A new architectural approach, such as event-based processing with at-memory compute, is fundamental to addressing the efficiency challenge. Such approaches draw inspiration from neuromorphic principles, mimicking the brain to minimize operations and hence energy consumption. However, energy efficiency is the cumulative effect not just of the architecture but also of model size, including the bit width of weights and activations. In particular, support for 32-bit floating point requires complex, large-footprint hardware. Reducing the bit width of weights and activations can provide a substantial benefit in performance and in reducing the hardware needed for computation. However, this must be done judiciously and innovatively to keep the outcomes and accuracy similar to those of the larger models. Through quantization, weights and activations can be converted to low bit-width values. Several sources have reported that lower-precision computation can provide similar classification accuracy at lower power consumption and better latency. This enables smaller-footprint hardware implementations, which reduce development, silicon, and packaging costs and enable on-device processing in handheld, portable, and edge devices.
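To make the size argument concrete, the short Python sketch below estimates weight storage for the 62-million-parameter AlexNet figure cited earlier, at 32 bits and at 4 bits per parameter. The helper name `model_size_mb` is hypothetical, and the estimate counts weights only, ignoring activations, metadata, and any packing overhead.

```python
def model_size_mb(num_params: int, bits_per_param: int) -> float:
    """Storage needed for the weights alone, in megabytes (10**6 bytes)."""
    return num_params * bits_per_param / 8 / 1e6


# Parameter count quoted for AlexNet earlier in this article.
ALEXNET_PARAMS = 62_000_000

fp32_mb = model_size_mb(ALEXNET_PARAMS, 32)  # 32-bit floating-point weights
int4_mb = model_size_mb(ALEXNET_PARAMS, 4)   # 4-bit quantized weights

print(f"32-bit: {fp32_mb:.0f} MB")
print(f"4-bit:  {int4_mb:.0f} MB")
print(f"ratio:  {fp32_mb / int4_mb:.0f}x")
```

Under these assumptions the weights shrink from 248 MB to 31 MB, an eightfold reduction that follows directly from the ratio of bit widths.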

To make the development process easier, BrainChip has developed the MetaTF™ software, which integrates with TensorFlow™ (and other edge AI development flows) and includes APIs for 4-bit processing and quantization functionality to enable retraining and optimization.

Developers can therefore seamlessly build and optimize for the Akida Neural Processor and benefit from executing neural networks entirely on-chip, efficiently, and with low latency.

Quantization is the process of mapping continuous, infinite values to a smaller set of discrete, finite values. In modern AI, this means mapping 32-bit floating-point values to a small, discrete set of low bit-width values. Quantization yields an efficient way to represent, manipulate, and communicate numeric values in machine learning (ML) applications. With 4-bit quantization, 32-bit floating-point numbers are mapped onto 16 discrete levels (0 to 15) to minimize the number of bits required while maintaining classification accuracy. 4-bit quantized models achieve remarkable performance on diverse tasks such as object classification, face recognition, segmentation, object detection, and keyword recognition.
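As a rough illustration of the mapping onto the levels 0 to 15, the sketch below applies plain min/max uniform quantization to a handful of floating-point values. This is a generic textbook scheme chosen for illustration, not a description of how MetaTF or the Akida hardware quantizes; the function names `quantize_4bit` and `dequantize` and the sample values are assumptions.

```python
def quantize_4bit(values):
    """Map floats onto integer codes 0..15 with uniform min/max quantization."""
    levels = 15  # 2**4 - 1, the largest code that fits in 4 bits
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels
    if scale == 0:
        scale = 1.0  # constant input: every value maps to code 0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize(codes, scale, lo):
    """Recover approximate floating-point values from the 4-bit codes."""
    return [c * scale + lo for c in codes]


# Illustrative values only; real weights would come from a trained model.
weights = [-0.8, -0.33, 0.0, 0.21, 0.7]
codes, scale, lo = quantize_4bit(weights)
restored = dequantize(codes, scale, lo)

for w, c, r in zip(weights, codes, restored):
    print(f"{w:+.2f} -> code {c:2d} -> {r:+.3f}")
```

Each restored value differs from the original by at most half a quantization step (`scale / 2`). Retraining with quantization in the loop, as mentioned above, is one way to keep that rounding error from degrading accuracy.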

The BrainChip Akida neural processor performs all the operations needed to execute a low bit-width convolutional neural network, thereby offloading the entire task from the central processor or microcontroller. The design is optimized for high-performance machine learning applications, delivering efficient, low-power operation while performing thousands of operations simultaneously on each phase of the 300 MHz clock cycle. A unique feature of the Akida neural processor is its ability to learn in real time, allowing products to be conveniently configured in the field without cloud access. The technology is available as a chip or as a small IP block to integrate into an ASIC.

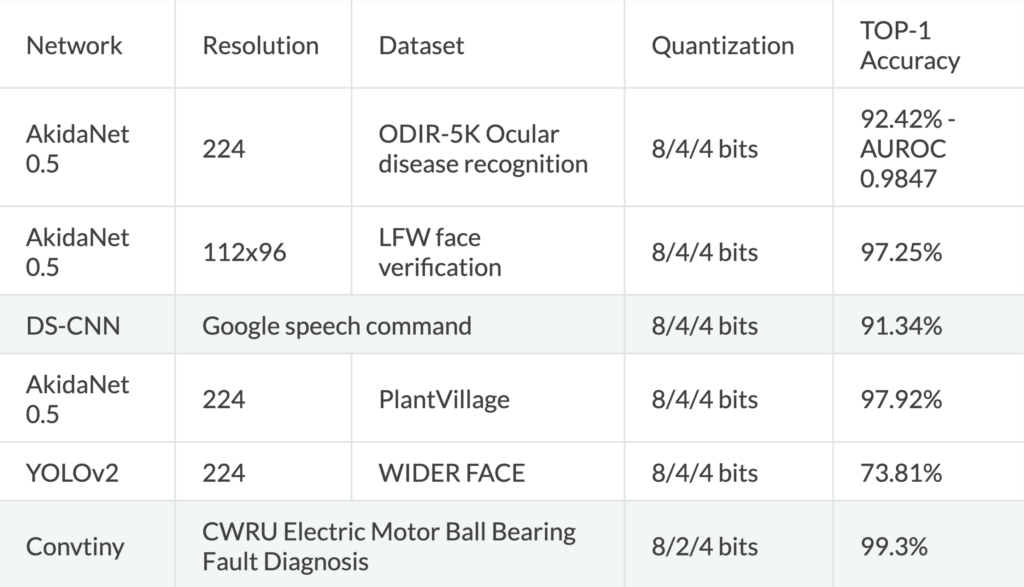

Table 1 shows that the accuracy of several 4-bit CNNs is comparable to floating-point accuracy. For example, AkidaNet is a version of MobileNet optimized for 4-bit classification, and many other example networks can be downloaded from the BrainChip website. In the quantization column, the notation ‘a’/‘b’/‘c’ gives the weight bits for the first layer (‘a’), the weight bits for subsequent layers (‘b’), and the output activation map bits for every layer (‘c’).

Table 1. Accuracy of inference.
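The quantization notation used in Table 1 can be read mechanically. The sketch below parses such a string into its three fields; the names `QuantScheme` and `parse_scheme` are hypothetical, and the example string `"8/4/4"` is purely illustrative rather than a value taken from the table.

```python
from typing import NamedTuple


class QuantScheme(NamedTuple):
    first_layer_weight_bits: int   # 'a': weight bits for the first layer
    other_layer_weight_bits: int   # 'b': weight bits for subsequent layers
    activation_bits: int           # 'c': output activation map bits, every layer


def parse_scheme(text: str) -> QuantScheme:
    """Parse an 'a/b/c' quantization string into named bit widths."""
    parts = text.split("/")
    if len(parts) != 3:
        raise ValueError(f"expected 'a/b/c', got {text!r}")
    a, b, c = (int(p) for p in parts)
    return QuantScheme(a, b, c)


scheme = parse_scheme("8/4/4")  # illustrative input, not from Table 1
print(scheme)
```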

AkidaNet is a feed-forward network optimized to work with 4-bit weights and activations. AkidaNet 0.5 has half the parameters of AkidaNet 1.0. The Akida hardware supports YOLO, DeviceNet, VGG, and other feed-forward networks. Recurrent networks and transformer networks are supported with minimal CPU participation. An example recurrent network implemented on the AKD1000 chip required just 3% CPU participation, with 97% of the network running on Akida.

4-bit network resolution is not unique. BrainChip pioneered this machine learning technology as early as 2015 and, through multiple silicon implementations, tested it and delivered a commercial offering to the market. Others, such as IBM, Stanford University, and MIT, have recently published papers on its advantages.

Akida is based on a neuromorphic, event-based, fully digital design with additional convolutional features. The combination of spiking, event-based neurons and convolutional functions is unique. It offers many advantages, including on-chip learning, small size, sparsity, and power consumption in the microwatt to milliwatt range. The underlying technology is not the usual matrix multiplier but up to a million digital neurons with 1-, 2-, or 4-bit synapses. Akida’s extremely efficient event-based neural processor IP is commercially available as a device (AKD1000) and as an IP offering that can be integrated into partner systems on chip (SoCs). The hardware can be configured through the MetaTF software, integrated into TensorFlow layers equating up to 5 million filters, thereby simplifying model development, tuning, and optimization through popular development platforms like TensorFlow/Keras and Edge Impulse. A fast-growing number of models is available through the Akida model zoo and the BrainChip ecosystem.

To dive a little deeper into the value of 4-bit, IBM’s 2020 NeurIPS paper described the various pieces that are already present and how they come together. The authors demonstrate the readiness and the benefit through several experiments simulating 4-bit training for a variety of deep-learning models in computer vision, speech, and natural language processing. The results show a minimal loss of accuracy in the models’ overall performance compared with 16-bit deep learning. 4-bit training is also reported to be more than seven times faster and seven times more energy efficient. Boris Murmann, a professor at Stanford who was not involved in the research, calls the results exciting. “This advancement opens the door for training in resource-constrained environments,” he says. It would not necessarily make new applications possible, but it would make existing ones faster and less battery-draining “by a good margin.”

For edge AI solutions that are extremely energy-sensitive, thermally constrained, and in need of efficient real-time response, the advantage of 4-bit weights and activations is compelling and points to a strong trend for the coming years. BrainChip has pioneered this path since 2016 and has invested in a simplified flow and ecosystem to enable developers. BrainChip’s MetaTF compilation and tooling are integrated into TensorFlow™ and Edge Impulse. TensorFlow/Keras is a familiar environment to most data scientists, while Edge Impulse is a strong emerging platform for edge AI and TinyML. MetaTF, many application examples, and source code are available free of charge from the BrainChip website: https://doc.brainchipinc.com/examples/index.html

BrainChip continues to invest in advanced machine learning technologies to further its market leadership.

Source: IBM NeurIPS proceedings 2020: https://proceedings.neurips.cc/paper/2020/file/13b919438259814cd5be8cb45877d577-Paper.pdf

Source: MIT Technology Review. https://www.technologyreview.com/2020/12/11/1014102/ai-trains-on-4-bit-computers/