Designing Smarter and Safer Cars with Essential AI

Conventional AI silicon and cloud-centric inference models do not perform efficiently at the automotive edge. As many semiconductor companies have already realized, latency and power are two primary issues that must be effectively addressed before the automotive industry can manufacture a new generation of smarter and safer cars. To meet consumer expectations, these vehicles need to feature highly personalized and responsive in-cabin systems while supporting advanced assisted driving capabilities.

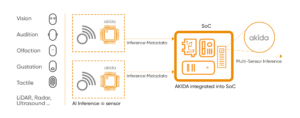

That’s why automotive companies are untethering edge AI functions from the cloud – and performing distributed inference computation on local neuromorphic silicon using BrainChip’s AKIDA. This Essential AI model sequentially leverages multiple AKIDA-powered smart sensors and AKIDA AI SoC accelerators to efficiently capture and analyze inference data within designated regions of interest (ROIs), that is, the samples within a data set that provide value for inference.

In-Cabin Experience

According to McKinsey analysts, the in-cabin experience is poised to become one of the most important differentiators for new car buyers. With AKIDA, automotive companies are designing lighter, faster, and more energy-efficient in-cabin systems that can act independently of the cloud. These include advanced facial detection and customization systems that automatically adjust seats, mirrors, infotainment settings, and interior temperatures to match driver preferences.

AKIDA also enables sophisticated voice control technology that instantly responds to commands – as well as gaze estimation and emotion classification systems that proactively prompt drivers to focus on the road. Indeed, the Mercedes-Benz Vision EQXX features AKIDA-powered neuromorphic AI voice control technology which is five to ten times more efficient than conventional systems.

Neuromorphic silicon – which processes data with efficiency, precision, and economy of energy – is playing a major role in transforming vehicles into transportation capsules with personalized features and applications to accommodate both work and entertainment. This evolution is driven by smart sensors capturing very large volumes of data at the automotive edge to create holistic and immersive in-cabin experiences.

Assisted Driving – Computer Vision

In addition to redefining the in-cabin experience, AKIDA allows the computer vision components of advanced driver assistance systems (ADAS) to detect vehicles, pedestrians, bicyclists, signs, and other objects with high levels of precision.

Specifically, pairing two-stage object detection inference algorithms with local AKIDA silicon enables computer vision systems to efficiently perform processing in two primary stages – at the sensor (inference) and AI accelerator (classification). Using this paradigm, computer vision systems rapidly and efficiently analyze vast amounts of inference data within specific ROIs.
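The two-stage split described above can be illustrated with a minimal sketch. It is not AKIDA code and uses no BrainChip API: the names `propose_rois`, `classify_rois`, and `toy_classifier`, the tile size, and the brightness threshold are all hypothetical stand-ins chosen for illustration. The first function plays the role of the sensor-side stage that selects ROIs, and the second plays the role of the accelerator-side stage that classifies only those regions.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Frame = List[List[int]]  # grayscale image: rows of pixel intensities (0-255)


@dataclass(frozen=True)
class ROI:
    top: int
    left: int
    height: int
    width: int


def propose_rois(frame: Frame, tile: int = 4, threshold: float = 50.0) -> List[ROI]:
    """Stage 1 (assumed sensor-side step): keep tiles whose mean intensity
    exceeds a threshold. A simple stand-in for a learned region proposer."""
    rois = []
    rows, cols = len(frame), len(frame[0])
    for top in range(0, rows - tile + 1, tile):
        for left in range(0, cols - tile + 1, tile):
            pixels = [frame[r][c]
                      for r in range(top, top + tile)
                      for c in range(left, left + tile)]
            if sum(pixels) / len(pixels) > threshold:
                rois.append(ROI(top, left, tile, tile))
    return rois


def crop(frame: Frame, roi: ROI) -> Frame:
    """Cut the ROI out of the full frame."""
    return [row[roi.left:roi.left + roi.width]
            for row in frame[roi.top:roi.top + roi.height]]


def classify_rois(frame: Frame, rois: List[ROI],
                  classifier: Callable[[Frame], str]) -> List[Tuple[ROI, str]]:
    """Stage 2 (assumed accelerator-side step): classify only the proposed ROIs."""
    return [(roi, classifier(crop(frame, roi))) for roi in rois]


def toy_classifier(patch: Frame) -> str:
    """Hypothetical classifier: labels a patch by its mean brightness."""
    mean = sum(map(sum, patch)) / (len(patch) * len(patch[0]))
    return "bright-object" if mean > 150 else "dim-object"


# Demo: an 8x8 frame that is dark except for a bright 4x4 patch (top right).
frame = [[0] * 8 for _ in range(8)]
for r in range(4):
    for c in range(4, 8):
        frame[r][c] = 200

rois = propose_rois(frame)
detections = classify_rois(frame, rois, toy_classifier)
pixels_total = len(frame) * len(frame[0])
pixels_classified = sum(roi.height * roi.width for roi in rois)
print(f"classified {pixels_classified} of {pixels_total} pixels: {detections}")
```

In this toy frame the second stage sees only the one tile that the first stage flagged, which is the point of the design: the more expensive step runs on a fraction of the raw data.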

Intelligently refining inference data eliminates the need for compute-heavy hardware such as general-purpose CPUs and GPUs that draw considerable amounts of power and increase the size and weight of computer vision systems. It also allows ADAS to generate highly detailed real-time 3D maps that help drivers safely navigate busy roads and highways.

Assisted Driving – LiDAR

Automotive companies are also leveraging a sequential computation model with AKIDA-powered smart sensors and AI accelerators to enable the design of new LiDAR systems. This is because most LiDAR systems typically rely on general-purpose GPUs – and run cloud-centric, compute-heavy inference models that carry a large carbon footprint when processing enormous amounts of data.

Indeed, LiDAR sensors typically fire 8 to 108 laser beams in a series of cyclical pulses, each emitting billions of photons per second. These beams bounce off objects and are analyzed to identify and classify vehicles, pedestrians, animals, and street signs. With AKIDA, LiDAR processes millions of data points simultaneously, using only minimal amounts of compute power to accurately detect – and classify – moving and stationary objects with equal levels of precision.

Limiting inference to an ROI helps automotive manufacturers eliminate compute- and energy-heavy hardware such as general-purpose CPUs and GPUs in LiDAR systems – and accelerate the rollout of advanced assisted driving capabilities.
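To make the idea of limiting inference to an ROI concrete, here is a minimal sketch of filtering a LiDAR point cloud down to a box-shaped region before any further processing. It is an illustration under stated assumptions, not BrainChip's method: the `Point` and `BoxROI` types, the `filter_to_roi` helper, the sample points, and the corridor dimensions are all hypothetical.

```python
from typing import Iterable, List, NamedTuple


class Point(NamedTuple):
    x: float  # metres ahead of the sensor
    y: float  # metres to the left (+) or right (-)
    z: float  # metres above the road surface


class BoxROI(NamedTuple):
    x_min: float
    x_max: float
    y_min: float
    y_max: float
    z_min: float
    z_max: float


def in_roi(p: Point, roi: BoxROI) -> bool:
    """True when the point lies inside the axis-aligned box."""
    return (roi.x_min <= p.x <= roi.x_max
            and roi.y_min <= p.y <= roi.y_max
            and roi.z_min <= p.z <= roi.z_max)


def filter_to_roi(points: Iterable[Point], roi: BoxROI) -> List[Point]:
    """Keep only the points worth passing on to the classification stage."""
    return [p for p in points if in_roi(p, roi)]


# Hypothetical returns from one sweep; only the first two sit in the lane ahead.
cloud = [
    Point(5.0, 0.5, 0.2),
    Point(12.0, -1.0, 1.0),
    Point(80.0, 0.0, 0.5),   # too far ahead
    Point(10.0, 15.0, 0.3),  # far off to the side
    Point(8.0, 0.0, 6.0),    # overhead structure
]
# Assumed corridor: 50 m ahead, 2 m either side, up to 3 m high.
corridor = BoxROI(0.0, 50.0, -2.0, 2.0, 0.0, 3.0)

kept = filter_to_roi(cloud, corridor)
print(f"kept {len(kept)} of {len(cloud)} points for inference")
```

A real system would choose the region dynamically and work on far larger clouds, but the effect is the same in kind: downstream inference handles only the points inside the region.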

Essential AI

Scalable AKIDA-powered smart sensors bring common sense to the processing of automotive data – freeing ADAS and in-cabin systems to do more with less by allowing them to infer the big picture from the basics. With AKIDA, automotive manufacturers are designing sophisticated edge AI systems that deliver immersive end-user experiences, support the ever-increasing data and compute requirements of assisted driving capabilities, and enable the self-driving cars and trucks of the future.

To learn more about how BrainChip brings Essential AI to the next generation of smarter cars, download our new white paper here.